News Story

A cooperative control algorithm for robotic search and rescue

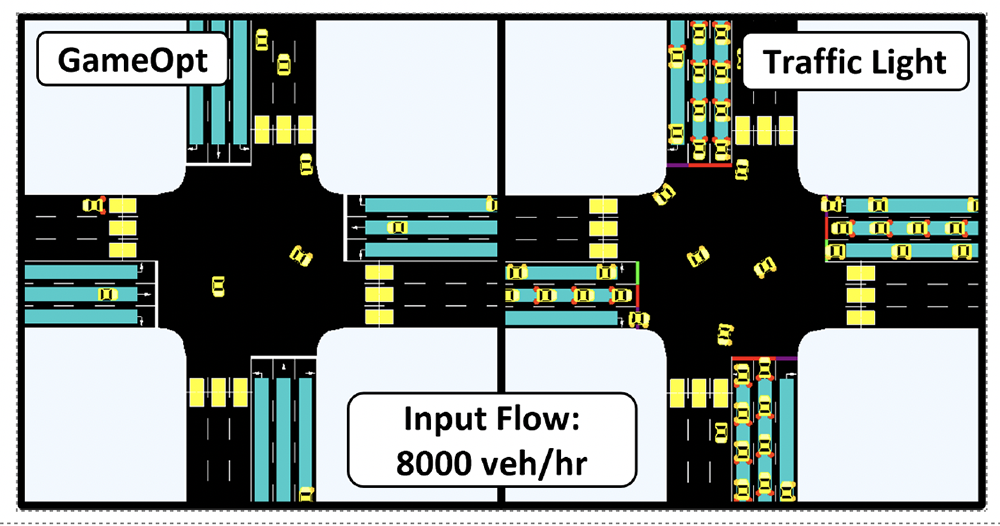

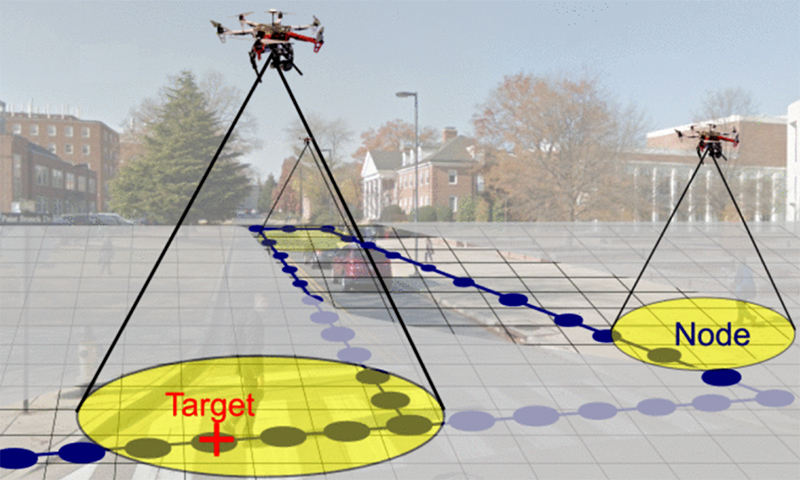

Autonomous quadrotors with noisy and range-limited sensors explore an urban environment. Their objective is to simultaneously map an unknown occupancy graph (dark blue nodes and edges) and search for a ground target (red “+” marker) moving over this graph. The occupancy graph is embedded in a larger search grid. The state of a search grid cell that contains an occupancy graph node may be occupied or unoccupied (depending on the presence or absence of a target), whereas the state of cells with no occupancy graph nodes is always void. (Fig. 1 from the paper)

In standard military reconnaissance and search and rescue missions, people map uncertain environments and search for, detect and track targets. Autonomous mobile robots also are able to perform these tasks, potentially all at the same time. Both robotic and human teams may benefit from using cooperative sampling strategies that assimilate noisy measurements to estimate the environment or location of a target and guide the collection of subsequent measurements. That is why many methods are being proposed to efficiently address the map, search, and tracking objectives independently, as well as balancing the priorities, for example, by combining searching and tracking.

In a paper published in IEEE Robotics and Automation Letters, a team of University of Maryland researchers develop a cooperative mapping and target-search algorithm for a team of autonomous quadrotors equipped with noisy, range-limited sensors. The algorithm can concurrently map and search an unknown urban area, while detecting and tracking a mobile ground target. Cooperative Mapping and Target Search over an Unknown Occupancy Graph Using Mutual Information was written by ISR/Maryland Robotics Center postdoctoral researcher Artur Wolek, Ph.D. students Sheng Cheng and Debdipta Goswami, and their advisor, Maryland Robotics Center Director Derek Paley (AE/ISR).

The paper presents a cooperative-control algorithm that guides a team of autonomous quadrotors to simultaneously build an occupancy graph of an uncertain environment and detect a single target moving on this graph.

While their approach is related to other strategies that use mutual information and information-theoretic methods to guide mapping, search and tracking, the Maryland researchers consider a variation of the problem in which the environment is represented by an occupancy graph and focus on concurrent mapping and search. Related work on surveillance and pursuit and evasion over graphs or in continuous environments provides search guarantees, but assumes perfect target and mapping sensors. Paley and colleagues extend these approaches to the case where the graph is not known initially and is instead constructed “on the fly.”

The approach constructs an occupancy graph and target state graph from noisy sensor measurements, and is able to encode past and present target locations. It includes a biased random-walk target motion model for use in a likelihood ratio detector and tracker. It comprehensively generates sampling tasks that unifies mapping and search objectives using mutual information, and develops a task-assignment strategy that assigns conflict-free and locally optimal sampling paths to each agent.

The researchers tested the feasibility of their strategy with an outdoor flight test experiment involving two real and two Monte Carlo simulated quadrotors, and used numerical simulations to compare map and search performance to non-adaptive “lawnmower” and random coverage strategies. The results suggest that using mutual information leads to nodes and targets being detected sooner when compared to the non-adaptive methods, making mutual-information-based planning appropriate for scenarios in which gathering information is time sensitive, such as in search and rescue applications.

Published March 3, 2020